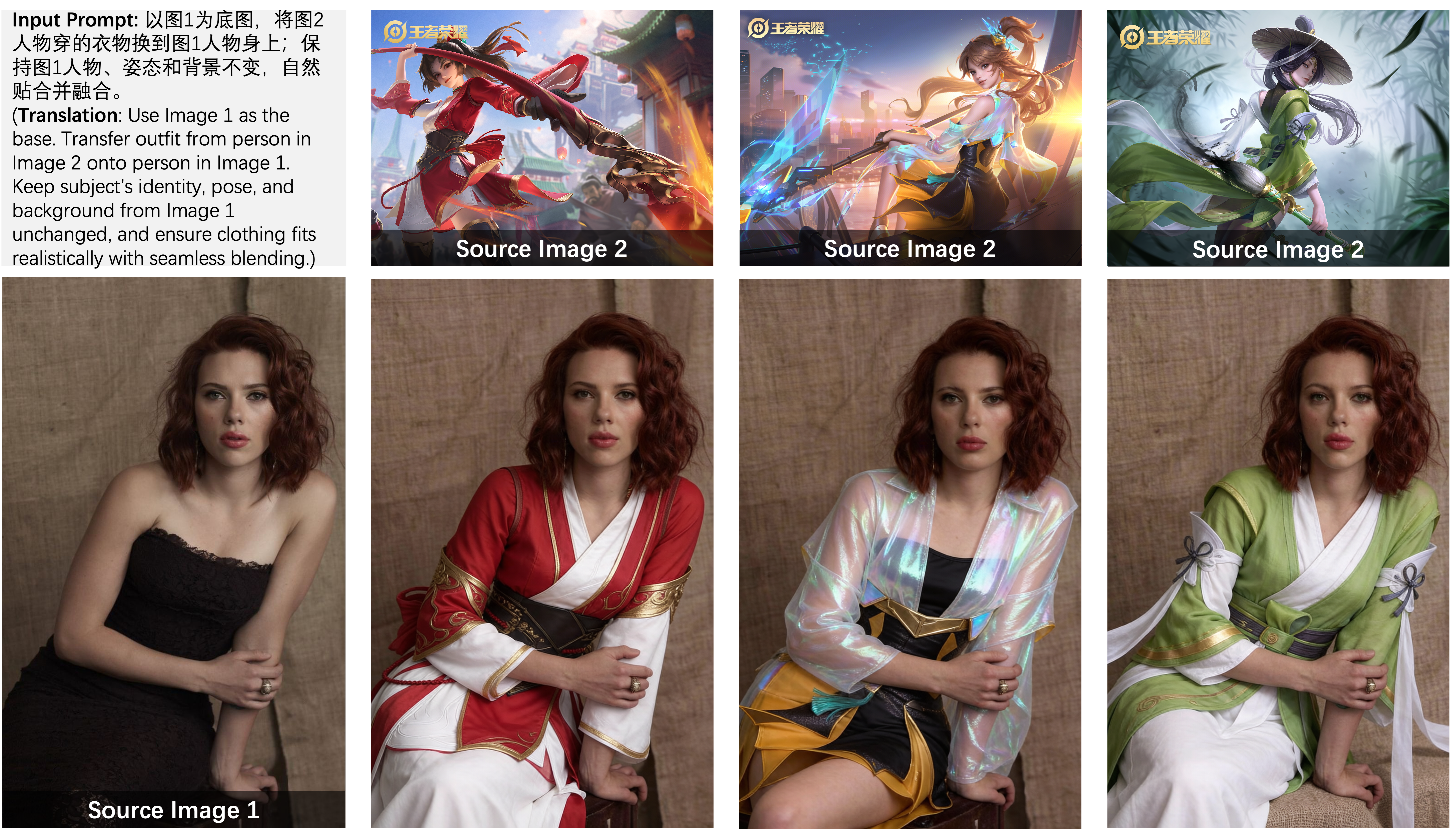

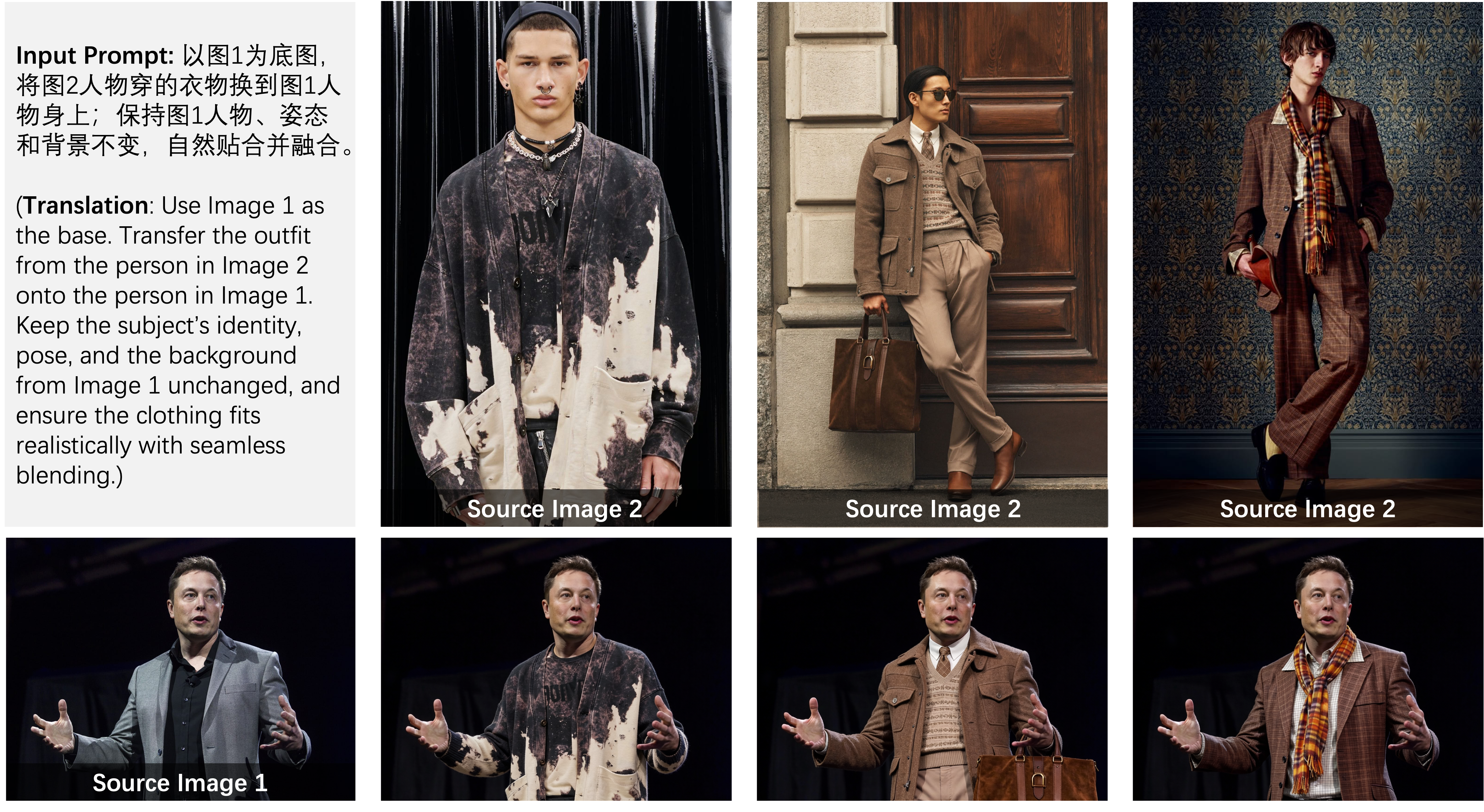

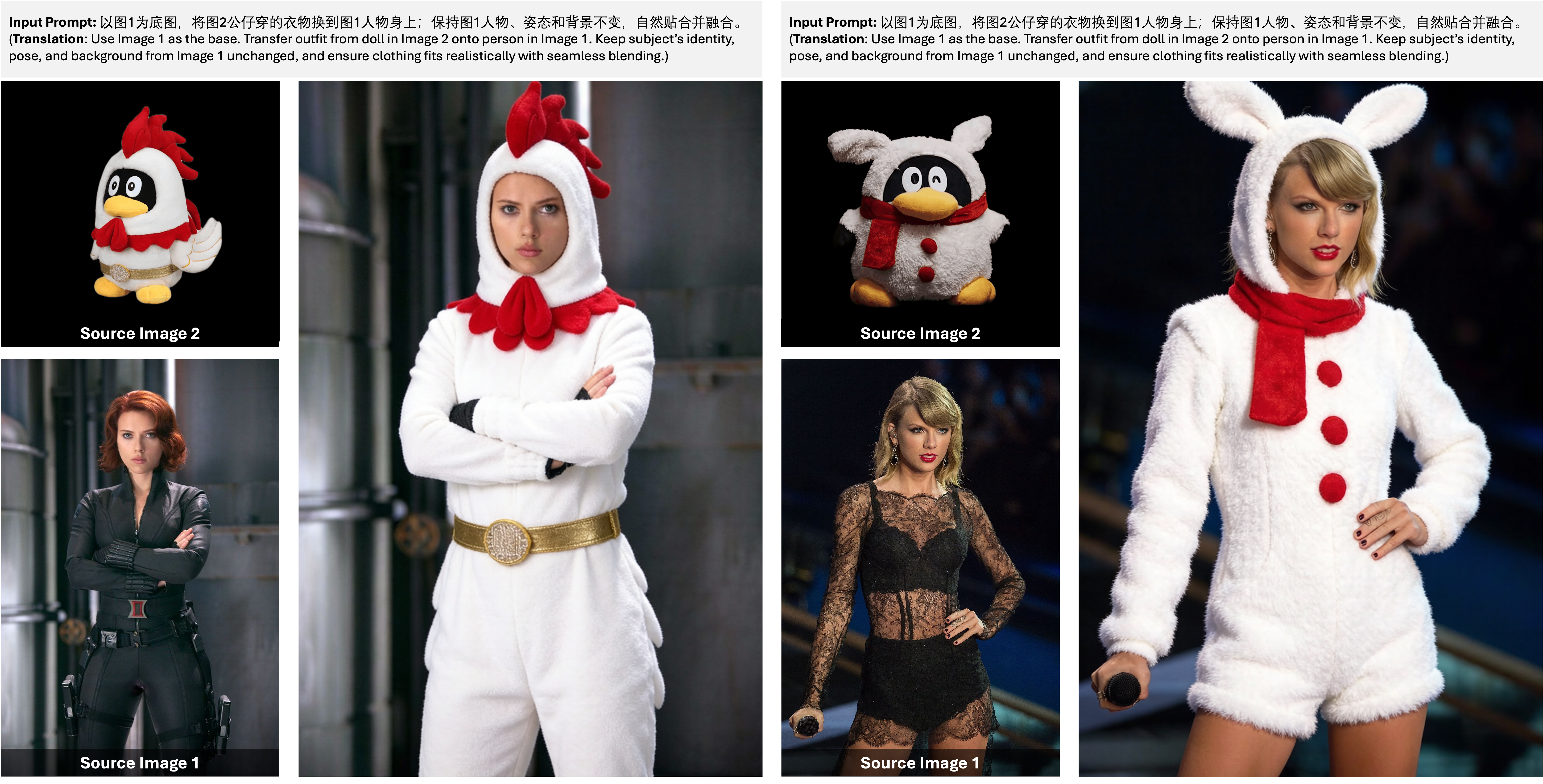

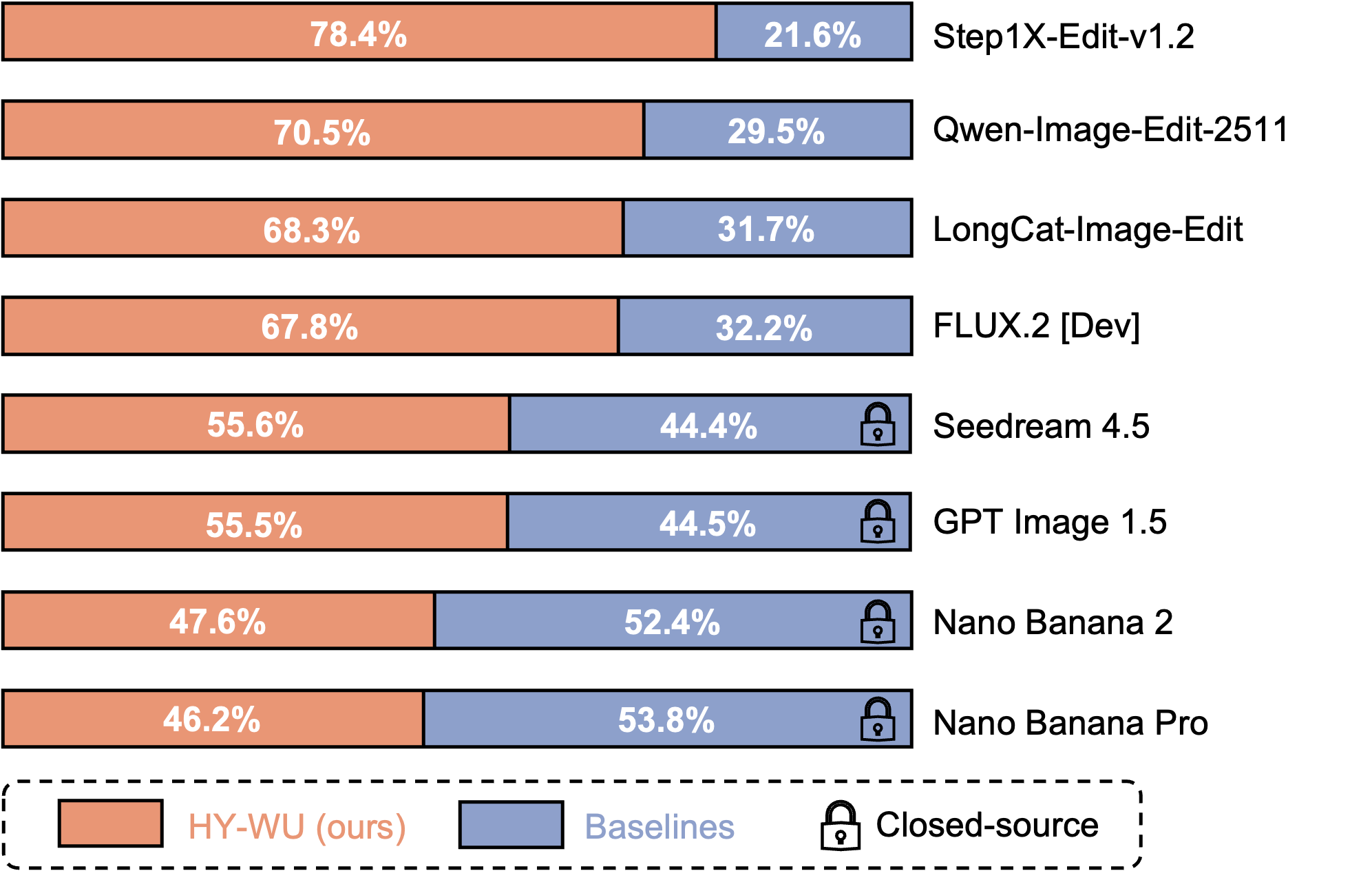

We propose HY-WU, a scalable framework for on-the-fly conditional generation of low-rank adaptation (LoRA) updates. HY-WU synthesizes instance-conditioned adapter weights from hybrid image–instruction representations and injects them into a frozen backbone during the forward pass, producing an instance-specific operator for each input without any test-time optimization.
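
To make the mechanism concrete, the following is a minimal sketch of conditional low-rank weight generation, not the HY-WU implementation. It assumes a single frozen linear layer `W`, a condition vector `cond` standing in for the hybrid image–instruction representation, and a purely linear generator; the names `WeightGenerator` and `adapted_forward` and all dimensions are hypothetical and chosen only for illustration.

```python
import random


def matvec(M, v):
    """Multiply matrix M (a list of rows) by vector v."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def rand_matrix(rows, cols, rng, scale=0.1):
    """Small random matrix; stands in for trained parameters."""
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


class WeightGenerator:
    """Hypothetical generator mapping a condition vector to LoRA factors.

    Assumption: a plain linear map is used here for brevity. It produces
    A (rank x d_in) and B (d_out x rank) so that delta_W = B @ A.
    """

    def __init__(self, d_cond, d_in, d_out, rank, rng):
        self.d_in, self.d_out, self.rank = d_in, d_out, rank
        self.to_A = rand_matrix(rank * d_in, d_cond, rng)
        self.to_B = rand_matrix(d_out * rank, d_cond, rng)

    def __call__(self, cond):
        flat_A = matvec(self.to_A, cond)
        flat_B = matvec(self.to_B, cond)
        # Reshape the flat outputs into the two low-rank factors.
        A = [flat_A[i * self.d_in:(i + 1) * self.d_in] for i in range(self.rank)]
        B = [flat_B[i * self.rank:(i + 1) * self.rank] for i in range(self.d_out)]
        return A, B


def adapted_forward(W, A, B, x):
    """Compute y = W x + B (A x). W is only read, never modified."""
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    return [b + d for b, d in zip(base, delta)]


# Illustrative sizes only.
d_cond, d_in, d_out, rank = 3, 4, 5, 2
rng = random.Random(0)

W = rand_matrix(d_out, d_in, rng)            # frozen backbone weight
gen = WeightGenerator(d_cond, d_in, d_out, rank, rng)
x = [1.0, -0.5, 0.25, 2.0]                   # one input activation

# Two different conditions yield two different operators on the same input,
# produced in a single forward pass with no gradient steps.
y1 = adapted_forward(W, *gen([1.0, 0.0, 0.5]), x)
y2 = adapted_forward(W, *gen([0.0, 1.0, -0.5]), x)
```

Because this stand-in generator is linear and has no bias, a zero condition vector gives a zero update and the layer reduces to the frozen backbone. A real generator would typically be a deeper network, and the factors would be injected into many layers rather than one.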